What Changed Recently (Exact Dates)

- OSHA opened 2025 ITA submissions on January 2, 2026, with the main due date on March 2, 2026.

- OSHA issued a letter of interpretation on lithium-ion battery hazards on February 9, 2026.

- BLS published 2024 fatal work injury data on February 19, 2026, reporting 5,070 fatalities.

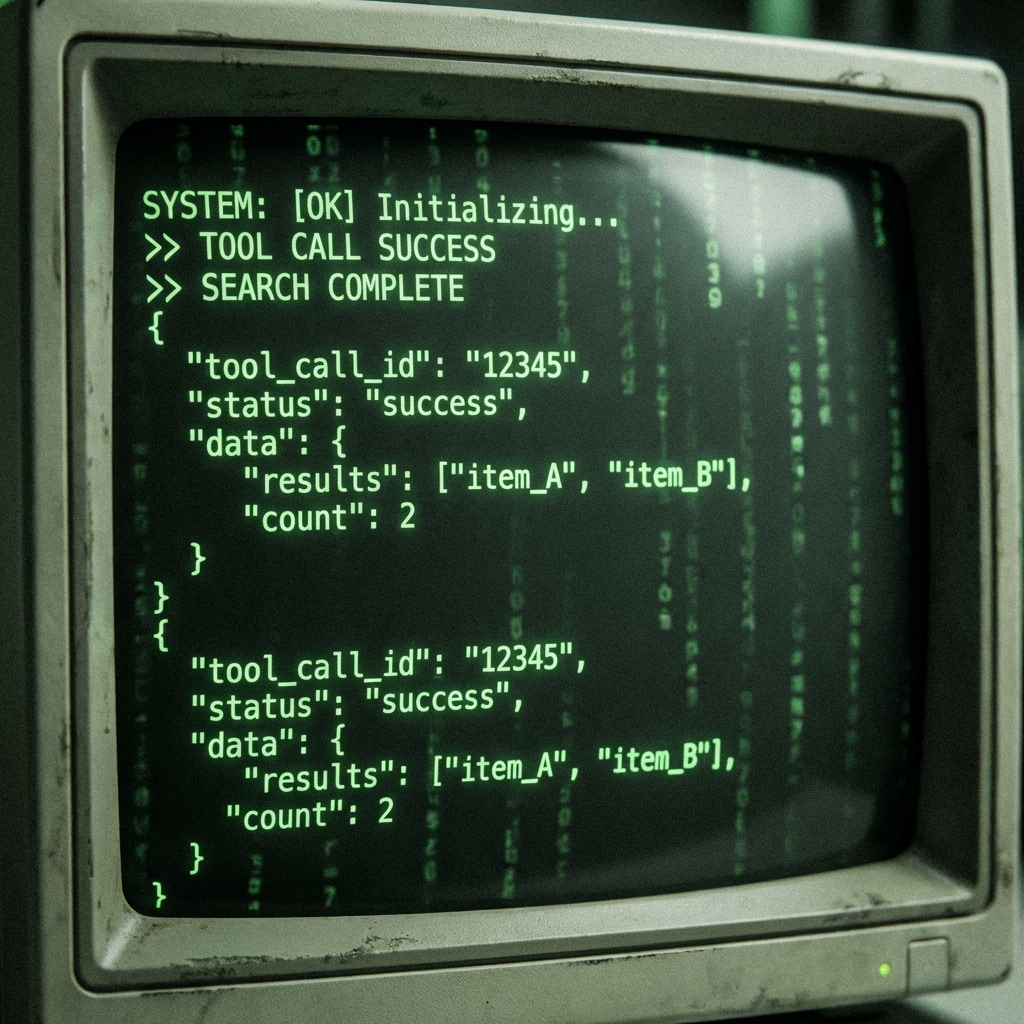

The Error Codes That Exposed My Data Problems

My first pass looked fine on screen, then failed validation. These are the exact log lines that sent me back to hunt for the root cause.

```
[ITA_CHECK] E_DATE_042: expected YYYY-MM-DD, got 02/31/2025
[ITA_CHECK] E_CASE_107: case_status value 'restrictedduty' not in enum
[SYNC] HTTP 400 Bad Request: establishment_naics is required
[QA] WARN_DUP_009: incident_id repeated in shift handover log
```

Personal Experience #1: One Typo, Four Hours Lost

Pro Tip: If your incident count matches but category totals do not, check enum normalization before checking formulas.
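Enum normalization can be sketched in a few lines. The canonical values below (`death`, `days_away`, `restricted_duty`, `other_recordable`) and the function names are assumptions for illustration, not the actual ITA schema; swap in whatever enum your export defines. The idea is to collapse case, spaces, hyphens, and underscores before comparing, so that `restrictedduty` and `Restricted Duty` land on the same value.

```typescript
// Hypothetical canonical values; substitute the enum your export schema defines.
const CASE_STATUSES = ["death", "days_away", "restricted_duty", "other_recordable"] as const;
type CaseStatus = (typeof CASE_STATUSES)[number];

// Collapse case, whitespace, hyphens, and underscores so variants compare equal.
function canonicalKey(value: string): string {
  return value.toLowerCase().replace(/[\s_-]+/g, "");
}

// Lookup from collapsed key to canonical value, built once.
const STATUS_BY_KEY = new Map<string, CaseStatus>(
  CASE_STATUSES.map((s): [string, CaseStatus] => [canonicalKey(s), s]),
);

// Returns the canonical status, or null when the input matches nothing.
function normalizeCaseStatus(raw: string): CaseStatus | null {
  return STATUS_BY_KEY.get(canonicalKey(raw)) ?? null;
}
```

Returning `null` rather than guessing keeps unknown values visible, so a row with a status like `light duty` can be sent back for review instead of being silently bucketed.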

My 7-Step Debug Sequence

- Freeze edits and export one immutable snapshot.

- Validate date format, enum values, and required IDs first.

- Run duplicate checks across shift, medical, and supervisor logs.

- Cross-check heat cases against your Heat Stress Scheduler records.

- Reconcile confined-space incidents with your Gas Monitor Log.

- Compare record totals with your ITA recovery checklist.

- Submit once, then archive the correction trail as evidence.
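The duplicate check in the third step could look roughly like this. It is a minimal sketch: `incident_id` comes from the log lines earlier in the post, while the `LogEntry` shape and the `source` label are assumptions about how the shift, medical, and supervisor logs get merged.

```typescript
// Hypothetical merged-log entry; `source` names the log the entry came from.
interface LogEntry {
  incident_id: string;
  source: string; // e.g. "shift", "medical", "supervisor"
}

// Group entries by trimmed incident_id and return only the IDs seen more
// than once, mapped to the sources where each one appeared.
function findDuplicateIncidents(entries: LogEntry[]): Map<string, string[]> {
  const sourcesById = new Map<string, string[]>();
  for (const entry of entries) {
    const id = entry.incident_id.trim();
    const sources = sourcesById.get(id) ?? [];
    sources.push(entry.source);
    sourcesById.set(id, sources);
  }
  const duplicates = new Map<string, string[]>();
  sourcesById.forEach((sources, id) => {
    if (sources.length > 1) duplicates.set(id, sources);
  });
  return duplicates;
}
```

The result only flags repeats. Whether a repeated ID is a true duplicate or one incident legitimately recorded in two logs is still a call for whoever owns the final signoff.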

| Debug approach | Typical time per issue | Failure pattern | Audit confidence |
|---|---|---|---|
| Ad-hoc spreadsheet edits | 15-20 min | New errors introduced during fixes | Low |
| Manual checklist only | 8-12 min | Missed duplicates across sources | Medium |
| Web Ocean structured log flow | 3-5 min | Few failures, thanks to locked formats and timestamps | High |

Personal Experience #2: Date Parsing Was the Real Culprit

Pro Tip: Build one pre-submit validator script and run it locally before every upload window.
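A pre-submit validator along these lines might start with a strict date check, since a pattern match alone would accept an impossible date like February 31. This is a sketch under assumptions: the `RawRow` shape and both function names are hypothetical, and only the field names are taken from the log lines earlier in the post.

```typescript
// Hypothetical row shape; every field arrives as raw text from the export.
interface RawRow {
  incident_id: string;
  incident_date: string;
  establishment_naics?: string;
}

// True only for a real calendar date written as YYYY-MM-DD.
function isValidISODate(value: string): boolean {
  const match = /^(\d{4})-(\d{2})-(\d{2})$/.exec(value);
  if (!match) return false;
  const year = Number(match[1]);
  const month = Number(match[2]);
  const day = Number(match[3]);
  // Date.UTC rolls impossible dates forward (Feb 31 becomes Mar 3),
  // so the parts are compared back against the input.
  const d = new Date(Date.UTC(year, month - 1, day));
  return (
    d.getUTCFullYear() === year &&
    d.getUTCMonth() === month - 1 &&
    d.getUTCDate() === day
  );
}

// Collect every problem in a row instead of stopping at the first one.
function validateRow(row: RawRow): string[] {
  const errors: string[] = [];
  if (!row.incident_id.trim()) errors.push("incident_id is required");
  if (!isValidISODate(row.incident_date)) {
    errors.push(`incident_date: expected YYYY-MM-DD, got ${row.incident_date}`);
  }
  if (!row.establishment_naics?.trim()) {
    errors.push("establishment_naics is required");
  }
  return errors;
}
```

Reporting all errors per row in one pass means a single local run shows the full repair list before the upload window opens.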

The Patch That Stopped Repeat Failures

I added a guardrail layer before export. It blocks bad rows instead of passing them downstream.

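```typescript
// CaseRow, toISODate, and normalizeEnum are not shown in this snippet.
function normalizeCase(row: CaseRow): CaseRow {
  return {
    ...row,
    // Convert the incident date to YYYY-MM-DD.
    incident_date: toISODate(row.incident_date),
    // Map free-text status values onto the allowed enum.
    case_status: normalizeEnum(row.case_status),
    // Trim whitespace; a missing or empty NAICS becomes the 'UNKNOWN' sentinel.
    establishment_naics: row.establishment_naics?.trim() || 'UNKNOWN',
  };
}
```

Personal Experience #3: The Best Fix Was Boring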
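Normalizing a row does not by itself keep a bad one out of the export, so the blocking part of the guardrail needs a gate in front of it. A minimal sketch of such a gate follows. `Partition` and `partitionRows` are hypothetical names, and the validator is assumed to return a list of problems, with an empty list meaning the row is clean.

```typescript
// Rows that passed, plus rows that failed with the reasons attached.
interface Partition<T> {
  accepted: T[];
  rejected: { row: T; reasons: string[] }[];
}

// Split rows using a validator that returns a list of problems per row.
function partitionRows<T>(rows: T[], validate: (row: T) => string[]): Partition<T> {
  const result: Partition<T> = { accepted: [], rejected: [] };
  for (const row of rows) {
    const reasons = validate(row);
    if (reasons.length === 0) {
      result.accepted.push(row);
    } else {
      result.rejected.push({ row, reasons });
    }
  }
  return result;
}
```

Only `accepted` would go on to export. Keeping `rejected` with its reasons gives the correction trail something concrete to archive.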

My Opinion After Doing This Repeatedly

Safety data quality is not a software problem alone. It is an operations discipline. If teams log events late or loosely, no dashboard can save the final export.

What works is a simple loop: structured inputs, fast validation, and one owner for final signoff.

Need cleaner safety logs this week?

Start with one standardized workflow, then share your hardest validation error in the comments.
